- Blog

- Meshlab decimation

- Tommy pixen

- Print your brackets

- Amazon fire hd 10 plus

- Mozilla firefox free download for windows 8-1

- Freebsd mucommander

- Godocs iphone

- Beardedspice chrome

- Google hangouts for mac users

- Quentin quarantino planned parenthood fundraiser

- Strophes antistrophe episodes

- Kaspersky safe kids clean uninstall

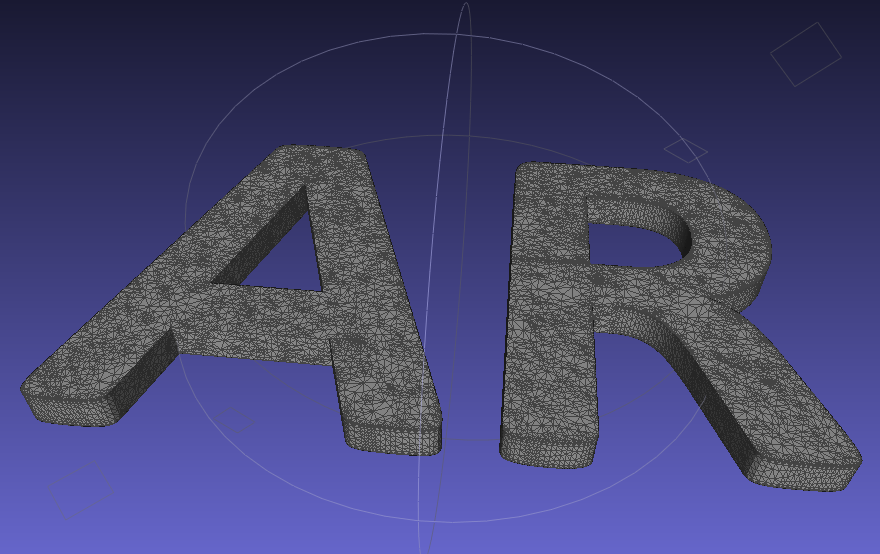

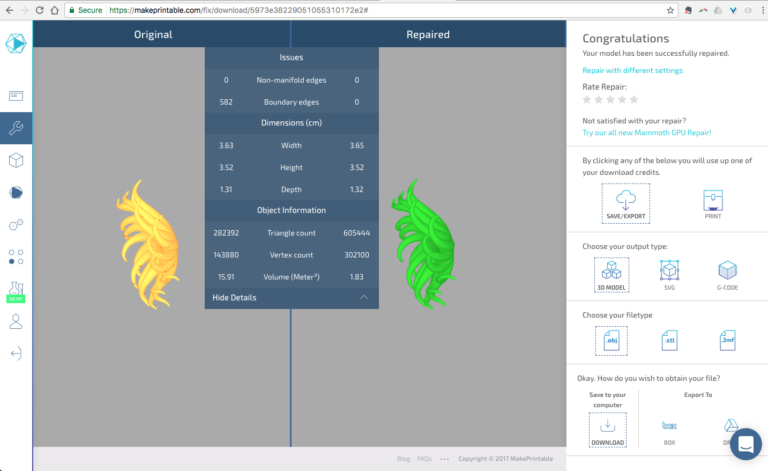

To simplify the mesh I used Filters > Remeshing, Simplification and Reconstruction > Quadric Edge Collapse Decimation. The last checkbox in that dialog proved to be very useful: when you simplify, you most probably do not want to simplify the face very much. So here is what you can do: on the MeshLab toolbox panel, pick the tool called Select Faces in a rectangular region and select everything but the face. Then run Quadric Edge Collapse Decimation with Simplify only selected faces checked.

Export the result with File > Export Mesh As… in *.ply format (or anything else Blender supports). Next we use Blender to overlay a human skeleton-like armature over our mesh, and there is a really detailed tutorial on how to do that.
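The name Quadric Edge Collapse Decimation comes from the quadric error metric: the cost of moving a vertex is measured as the sum of squared distances to the planes of the triangles around it, and the cheapest edge collapses are done first. The sketch below only illustrates that cost for a single point; it is not MeshLab's implementation, and the function names `plane_of` and `quadric_error` are made up for this example.

```python
import math

def plane_of(triangle):
    """Return (a, b, c, d) with a unit normal for the plane through a triangle."""
    (x0, y0, z0), (x1, y1, z1), (x2, y2, z2) = triangle
    ux, uy, uz = x1 - x0, y1 - y0, z1 - z0
    vx, vy, vz = x2 - x0, y2 - y0, z2 - z0
    # Cross product of the two edge vectors gives the face normal.
    nx, ny, nz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    a, b, c = nx / length, ny / length, nz / length
    d = -(a * x0 + b * y0 + c * z0)
    return a, b, c, d

def quadric_error(point, triangles):
    """Sum of squared distances from `point` to the planes of `triangles`."""
    x, y, z = point
    return sum((a * x + b * y + c * z + d) ** 2
               for a, b, c, d in map(plane_of, triangles))

# Two coplanar triangles forming a unit square in the z = 0 plane.
flat_patch = [
    ((0, 0, 0), (1, 0, 0), (0, 1, 0)),
    ((1, 0, 0), (1, 1, 0), (0, 1, 0)),
]
on_surface = quadric_error((0.5, 0.5, 0.0), flat_patch)
off_surface = quadric_error((0.5, 0.5, 1.0), flat_patch)
```

A point that stays on the flat patch costs nothing, which is why flat regions lose triangles first and detailed regions such as a face are worth protecting with a selection.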

After that it makes sense to simplify your model: you will not notice the difference visually, and Blender will be much happier working with a smaller mesh.

I used Filters > Point Set > Surface Reconstruction: Poisson, which seems to be the most popular way to do it (it will take a couple of minutes).
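Poisson reconstruction relies on point normals that are oriented consistently, which is why the normals are aimed relative to a viewpoint and then flipped to look outward. Here is a minimal sketch of that orientation rule in plain Python. It assumes the viewpoint (here the origin) lies inside the scanned object, and `orient_normals` is a hypothetical helper, not a MeshLab function.

```python
def orient_normals(points, normals, viewpoint=(0.0, 0.0, 0.0), outward=True):
    """Flip each normal so it points away from (or towards) the viewpoint."""
    oriented = []
    for point, normal in zip(points, normals):
        # Vector from the viewpoint to the point on the surface.
        to_point = tuple(p - v for p, v in zip(point, viewpoint))
        dot = sum(t * n for t, n in zip(to_point, normal))
        points_away = dot > 0  # normal already looks away from the viewpoint
        if points_away != outward:
            normal = tuple(-n for n in normal)
        oriented.append(tuple(normal))
    return oriented

points = [(1, 0, 0), (0, 2, 0)]
normals = [(-1, 0, 0), (0, 1, 0)]  # the first one looks inward
looking_out = orient_normals(points, normals)
looking_in = orient_normals(points, normals, outward=False)
```

With `outward=True` the first normal is flipped and the second is left alone; `outward=False` gives the opposite "all normals look at the viewpoint" result.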

So it happened that yesterday I did a 3D scan of myself. And today, to avoid doing all the things I really needed to do, I decided to go through the pipeline from a 3D scan to an animated model. It all started in a device similar to this one: The scanner packed me into a point cloud with ~400,000 points, which looks like this:

First we need to convert the point cloud into a mesh: a graphical model that consists of triangles. Triangles are better than points because once you have them you can compute lighting effects and give volume to your model. This operation is called surface reconstruction and can be easily done, for example, using MeshLab.

The first step was Filters > Point Set > Compute normals with the following parameters. This resulted in (almost) all normals looking at the point (0, 0, 0), as we requested. But we want our normals to look out, not in. So we invert them with Filters > Normals, Curvatures and Orientation > Per Vertex Normal Function. Render > Show Vertex Normals allows you to see the normals, here they are:

To align two meshes: once you've clicked Align, click on Glue Here Mesh. This sets one of the two point clouds as the base to which the others will be aligned. Once you do this, an asterisk will appear beside the base mesh. Then choose Point Based Glueing to select the points by which to align the meshes. A new window will pop up with both models in view.

To MeshLab veterans: good news, MeshLab was updated to a long-awaited new version in late 2016, and in the new version you can perform QECD multiple times in a row without crashing. For more detailed information, see the Shapeways Tutorial Polygon Reduction with MeshLab as well as Mister P.'s video Mesh Processing: Decimation.

I have to say it's a really impressive piece of software. Just did a quick test with Balancer, and it looks really great. I started from a 3DCoat voxel model, 225k polys, and reduced it to 11k tris using Modo, the 3DCoat export option and finally Balancer. Modo produces a lot of high-valence vertices in this case, giving many shading glitches. The job is pretty nice, and the reduction takes 2 sec or so. However, the triangulation is very uniform, meaning fewer triangles where they are actually needed (the silhouette). 3DCoat also produces a lot of micro triangles, which can break the shading. No tiny triangles, and nice triangle balancing in detailed areas. It seems that Balancer also cares about the vertex normals from the hi-res model, which sounds perfect for NURBS poly reduction too (but I didn't test it yet). Finally, the process was nearly real time (less than 1 sec).
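The "high-valence vertex" complaint above can be checked numerically: the valence of a vertex is the number of edges that meet at it, and vertices with unusually many edges tend to shade badly. The following is a small sketch that counts valence from an indexed triangle list; `vertex_valence` and the sample fan are illustrative only and not taken from any of the tools mentioned.

```python
from collections import defaultdict

def vertex_valence(triangles):
    """Map each vertex index to the number of distinct neighbouring vertices."""
    neighbours = defaultdict(set)
    for a, b, c in triangles:
        neighbours[a].update((b, c))
        neighbours[b].update((a, c))
        neighbours[c].update((a, b))
    return {vertex: len(adjacent) for vertex, adjacent in neighbours.items()}

# A closed fan of four triangles around vertex 0.
fan = [(0, 1, 2), (0, 2, 3), (0, 3, 4), (0, 4, 1)]
valence = vertex_valence(fan)
worst_vertex = max(valence, key=valence.get)
```

Running the same count on a decimated mesh and looking at the largest values is a quick way to compare how different reduction tools distribute their triangles.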
